What Is Query Fan-Out, and How Is It Connected to Google's Thematic Search Patent?

- Pradhumnya Khanayat

- Nov 24, 2025

- 6 min read

For years, the game of SEO was relatively straightforward: target a keyword, write a great page, and hope to rank. We focused on getting one result for one search term.

But today, Google's AI is changing the rules. In its AI-powered features, the search engine no longer treats a complex query as a single task; it treats it as a puzzle with dozens of pieces. This shift is driven by a behind-the-scenes process called Query Fan-Out (QFO). Understanding QFO is one of the most important steps you can take to future-proof your digital strategy.

Defining the Shift: What is Query Fan-Out (QFO)?

The Core Concept: The AI Calls in the Specialists

Imagine you have a complex problem, like "How do I start training for a half-marathon, and what gear do I need?" A traditional search engine would give you one list of results for that exact phrase. You would then have to click around, search again for "half-marathon nutrition," and search again for "running shoe reviews."

QFO fixes this by using a technique called Query Decomposition.

Definition in Simple Terms: QFO is an AI-powered technique in which the search engine automatically breaks your single, complex query into multiple, more specific sub-queries (or mini-questions).

The Analogy: Think of QFO as an expert doctor who, upon hearing your symptoms (the query), doesn't just treat the surface issue. Instead, they instantly call in a team of specialists—a sports physiologist, a nutritionist, and a gear expert—to research all angles of your problem at the exact same time.

The Goal: To satisfy your latent intent—the unstated, implied, and likely follow-up questions you would have asked next.

QFO and Google AI Mode

This method is the backbone of Google's new conversational search experiences.

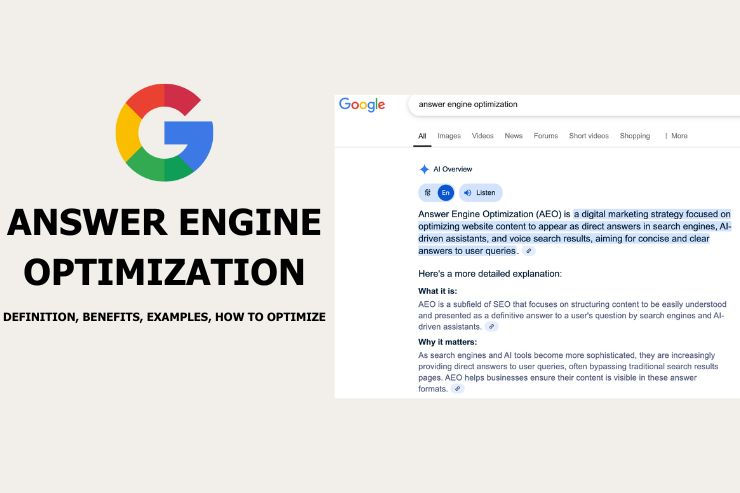

Where You See It: QFO is the engine powering the synthesized, structured answers you see at the top of the search results page: the AI Overviews (AIOs) and the deeper, chat-like AI Mode.

The Shift in Output: Because the AI may have researched 10-20 different questions, its answer is no longer a simple list of links. It's a rich, summarized response that might include comparison tables, pros-and-cons lists, and integrated product data from the Shopping Graph. It gives you the full picture in one go, reducing the need to click through multiple links.

QFO Technique and Method: Inside the Algorithmic Black Box

How does the AI, specifically Google's Gemini model, actually perform this fan-out operation? Google has not published the full pipeline. It can be described as a rapid, multi-step process that combines the power of Large Language Models (LLMs) with traditional search engine infrastructure.

The QFO Technique: Step-by-Step Method

Query Submission & Analysis

You type a complex question. The AI uses advanced Natural Language Processing (NLP) to understand the meaning and intent behind every word, not just the keywords.

Sub-Query Generation (The Fan-Out)

The LLM instantly generates a portfolio of synthetic sub-queries that cover the main dimensions of your question. For a query like "Best noise-cancelling headphones for travel with long battery life," the AI might generate:

"headphone noise-cancelling performance in flight"

"long battery life headphone reviews 2024"

"comfort comparison over-ear headphones"

"best headphone microphones for remote work"

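The fan-out step can be sketched in Python. This is a toy stand-in that assumes a hand-written table of trigger phrases. Google's system uses an LLM rather than keyword rules, and the names `FACET_TEMPLATES` and `fan_out` are hypothetical. The sketch only shows the shape of the idea: one query goes in, and several narrower sub-queries come out.

```python
# Toy query fan-out. The trigger -> sub-query table below is a hypothetical
# illustration; a real system would generate sub-queries with an LLM.
FACET_TEMPLATES = {
    "noise-cancelling": "headphone noise-cancelling performance in flight",
    "battery": "long battery life headphone reviews",
    "travel": "comfort comparison over-ear headphones",
}


def fan_out(query: str) -> list[str]:
    """Return one sub-query for every facet whose trigger appears in the query."""
    q = query.lower()
    return [sub for trigger, sub in FACET_TEMPLATES.items() if trigger in q]


if __name__ == "__main__":
    query = "Best noise-cancelling headphones for travel with long battery life"
    for sub_query in fan_out(query):
        print(sub_query)
```

A query that mentions none of the triggers produces no sub-queries. This illustrates why a fixed rule table is a poor substitute for an LLM, which can also infer latent intents that the query never states.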
Parallel Search & Retrieval (RAG)

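All these sub-queries are executed simultaneously, in parallel, across Google's index, the Knowledge Graph, and other specialized databases. This retrieval step is the core of the RAG (Retrieval-Augmented Generation) system: the model answers from documents it fetches instead of from its training memory alone.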
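Running the branches concurrently can be sketched with Python's standard `concurrent.futures.ThreadPoolExecutor`. The three-document `INDEX` and the word-overlap `search` function are made-up stand-ins for Google's index and ranking, which are not public. The sketch shows only that every sub-query is dispatched at once and that the results are collected per branch.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical three-document "index" used only for illustration.
INDEX = {
    "review-a": "long battery life and great comfort on long flights",
    "review-b": "noise-cancelling performance tested in flight",
    "forum-c": "comfort comparison of over-ear headphones",
}


def search(sub_query: str, top_k: int = 2) -> list[str]:
    """Rank documents by how many words they share with the sub-query."""
    terms = set(sub_query.lower().split())
    scored = []
    for doc_id, text in INDEX.items():
        overlap = len(terms & set(text.lower().split()))
        if overlap:
            scored.append((overlap, doc_id))
    # Most overlapping words first; ties broken by document ID.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def parallel_retrieve(sub_queries: list[str]) -> dict[str, list[str]]:
    """Dispatch every sub-query at once and map each one to its hits."""
    with ThreadPoolExecutor(max_workers=max(1, len(sub_queries))) as pool:
        results = list(pool.map(search, sub_queries))
    return dict(zip(sub_queries, results))
```

Threads fit this shape because real retrieval calls would mostly wait on the network. `pool.map` also returns results in the same order as the input sub-queries, so each branch keeps its own hit list.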

Result Clustering & Ranking

The system now receives a large pool of candidate document snippets, potentially millions. It scores them using methods such as:

Cross-Checking

It looks for documents that appear highly relevant across multiple fan-out branches. If one source answers both "long battery life" and "comfort comparison," it gets a higher score. This is where authority across the whole topic wins.
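The cross-checking idea, which rewards a source that appears in several branches, can be expressed as a simple count. This is an assumption about how such scoring might work, not a formula Google has published. The `cross_check` function and the document IDs are hypothetical.

```python
from collections import Counter


def cross_check(branch_hits: dict[str, list[str]]) -> list[tuple[str, int]]:
    """Score each document by the number of fan-out branches that retrieved it.

    This scoring rule is a hypothetical illustration, not Google's formula.
    """
    counts = Counter()
    for hits in branch_hits.values():
        counts.update(set(hits))  # count a document at most once per branch
    # Widest branch coverage first; ties broken alphabetically.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    print(cross_check({
        "long battery life headphone reviews": ["review-a", "review-b"],
        "comfort comparison over-ear headphones": ["review-a", "forum-c"],
    }))
```

Here `review-a` answers both the battery and the comfort branches, so it ranks above sources that answer only one of them.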

Quality Filtering

It filters aggressively for quality signals associated with E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) and for factual accuracy.
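Quality filtering can be pictured as a threshold applied before ranking. Google does not expose E-E-A-T as a single number. The `quality` field below is therefore a hypothetical 0-1 score standing in for signals such as author credentials and citations, and `min_quality=0.6` is an arbitrary cutoff chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    doc_id: str
    branch_count: int  # how many fan-out branches retrieved it (cross-checking)
    quality: float     # hypothetical 0-1 trust score standing in for E-E-A-T signals


def quality_filter(candidates: list[Candidate], min_quality: float = 0.6) -> list[str]:
    """Drop low-trust candidates, then rank survivors by branch coverage."""
    kept = [c for c in candidates if c.quality >= min_quality]
    kept.sort(key=lambda c: (-c.branch_count, -c.quality, c.doc_id))
    return [c.doc_id for c in kept]


if __name__ == "__main__":
    print(quality_filter([
        Candidate("review-a", 2, 0.9),
        Candidate("spam-x", 3, 0.2),
        Candidate("forum-c", 1, 0.7),
    ]))
```

In this example `spam-x` appears in the most branches but never reaches the ranking step. This mirrors the point that breadth without trust does not earn a citation.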

Synthesis & Grounding

The LLM takes the approved, highly ranked facts and uses them to construct the final answer. Crucially, the answer is designed to be grounded in one or more of the retrieved source documents, which is why citations appear linked next to the answer.
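Grounding can be illustrated as a gate on each candidate sentence: a claim is kept only if an approved source supports it, and its citations travel with it. The `ground_answer` function, the claim-to-source mapping, and the sample sentences are all hypothetical. A real system would have the LLM draft the sentences and link them to passages.

```python
def ground_answer(claims: dict[str, list[str]], approved: set[str]) -> list[str]:
    """Keep only claims backed by an approved source and attach their citations.

    `claims` maps a candidate sentence to the source IDs that support it;
    both the structure and the IDs are hypothetical.
    """
    answer = []
    for claim, sources in claims.items():
        cited = [s for s in sources if s in approved]
        if cited:  # an unsupported claim is left out instead of being stated
            answer.append(f"{claim} [{', '.join(cited)}]")
    return answer


if __name__ == "__main__":
    claims = {
        "Model X has the longest battery life.": ["review-a"],
        "Model X is the quietest ever made.": [],
        "Model Y stays comfortable on long flights.": ["forum-c", "spam-x"],
    }
    for line in ground_answer(claims, approved={"review-a", "forum-c"}):
        print(line)
```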

Why LLMs Use Query Fan-Out

QFO is not an SEO trick; it's an engineering solution to major LLM limitations:

Tackle Ambiguity: QFO is the system’s way of saying, "Let me check all possible interpretations of that vague term before I answer."

Improve Factual Grounding: Instead of relying only on its pre-trained memory (which can lead to "hallucinations," or made-up facts), the AI retrieves evidence for its claims from the live web. This cross-checking improves reliability.

Diversify Perspectives: By fanning out, the AI ensures it considers different source types (product pages, user forum discussions, clinical studies) to provide a truly balanced and comprehensive answer.

The Conceptual Foundation: The Thematic Connection

To understand how the facts gathered by QFO are organized into the final output, we can look at Google's own concepts, like the Thematic Search patent.

Thematic Search: The patent describes how a search engine can organize retrieved information into logical, descriptive themes or categories.

How They Fit Together: QFO is the expansion mechanism (the search), while Thematic Search is the organizational structure (the display). For a city query, the AI uses QFO to gather facts on "Cost of Living," "Neighborhoods," and "Leisure Activities," and then presents those facts grouped under those three themes in the AI Overview.
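The split between expansion and organization can be sketched as two small functions. Facts gathered by the fan-out arrive tagged with a theme, `group_by_theme` collects them under headings in first-seen order, and `render` lays them out. The theme tags, the fictional city facts, and both function names are illustrative assumptions. The patent does not prescribe this code.

```python
from collections import defaultdict


def group_by_theme(facts: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Collect (theme, fact) pairs under their theme, keeping first-seen theme order."""
    grouped = defaultdict(list)
    for theme, fact in facts:
        grouped[theme].append(fact)
    return dict(grouped)


def render(grouped: dict[str, list[str]]) -> str:
    """Lay the themes out as headed bullet lists, roughly as an AI Overview might."""
    lines = []
    for theme, items in grouped.items():
        lines.append(theme)
        lines.extend(f"- {item}" for item in items)
    return "\n".join(lines)


if __name__ == "__main__":
    # Fictional facts about a fictional city, tagged by theme.
    facts = [
        ("Cost of Living", "Rent is the largest monthly expense."),
        ("Neighborhoods", "The old town is walkable."),
        ("Cost of Living", "Transit passes are subsidized."),
        ("Leisure Activities", "The riverfront hosts weekend markets."),
    ]
    print(render(group_by_theme(facts)))
```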

The Key Parallel: Organization by Theme

This explains why AI Overviews are so user-friendly: they anticipate the follow-up steps.

This structure helps the LLM frame the final, synthesized AI Overview, ensuring the response is logically segmented and easy to navigate. It anticipates that a user researching a city will eventually want to know about the cost of housing and the job market, organizing the answer accordingly.

Tools and Analysis for QFO Optimization

This is a strategic shift from keyword targeting to intent coverage, and after almost a decade, our job is changing. We must move from being keyword matchers to intent dominators.

The challenge is critical: you write one page hoping to rank for one keyword, but the AI is judging your content's relevance across dozens of its self-generated sub-queries. The contest is now for passage-level relevance across that entire hidden intent ecosystem.

Query Fan-Out Coverage Analysis: The New Research

Since the AI's internal sub-queries are hidden from public tools like Google Search Console, we must reverse-engineer the fan-out process.

Definition: This crucial analysis involves mapping a target query to its complete fan-out sub-queries (the full user journey) and then critically assessing how completely your content addresses each generated branch.

Actionable Analysis Steps:

Identify Sub-Query Clusters: Generate a likely fan-out list for your target query (see Query Fan-Out Generators and Tools below).

Map Existing Content: Go through your page section by section. Check which sub-queries are answered explicitly by specific headings, paragraphs, and lists.

Identify Gaps: Find the branches where your content is silent, vague, or provides only implicit answers. These "content gaps" are the specific pieces of information the AI is likely to pull from your competitors, which keeps you from dominating the AIO citation space.
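The three analysis steps above can be roughed out in code. Each sub-query is matched to the page section that shares the most words with it, and sub-queries that no section covers well are flagged as gaps. Word overlap is a crude stand-in chosen for simplicity; embedding similarity would be more realistic. The `coverage_report` and `find_gaps` helpers, the `min_overlap=0.5` threshold, and the sample sections are hypothetical.

```python
from typing import Optional


def coverage_report(
    sub_queries: list[str], sections: dict[str, str], min_overlap: float = 0.5
) -> dict[str, Optional[str]]:
    """Map each sub-query to its best-matching section heading, or None if it is a gap."""
    report: dict[str, Optional[str]] = {}
    for sub_query in sub_queries:
        terms = set(sub_query.lower().split())
        if not terms:
            continue  # ignore blank sub-queries
        best_heading, best_score = None, 0.0
        for heading, body in sections.items():
            words = set(f"{heading} {body}".lower().split())
            score = len(terms & words) / len(terms)  # share of sub-query words present
            if score > best_score:
                best_heading, best_score = heading, score
        report[sub_query] = best_heading if best_score >= min_overlap else None
    return report


def find_gaps(report: dict[str, Optional[str]]) -> list[str]:
    """Sub-queries that no section answers well enough."""
    return [sq for sq, heading in report.items() if heading is None]


if __name__ == "__main__":
    sections = {
        "Battery Life": "Model X runs for a full day of travel on one charge.",
        "Comfort on Long Flights": "Over-ear cushions stay comfortable for hours.",
    }
    subs = ["model x battery life", "comfort on long flights", "microphone quality for calls"]
    print(find_gaps(coverage_report(subs, sections)))
```

In this sample the microphone branch has no matching section, so it is the gap a competitor's page would fill.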

Query Fan-Out Generators and Tools

We can approximate the AI's fan-out using a few methods:

Manual/AI Prompting: This is the cheapest and fastest way to start. Use an LLM like Gemini or a similar tool and prompt it: "Expand this query ['Your Main Query'] into 10 related sub-queries or user intents, categorized by search intent." This gives you the initial blueprint for your topic map.

SEO Tools: Specialized software is emerging that clusters People Also Ask sections, related questions, and competitive SERP features to mimic QFO behavior.

Goal: These tools provide a clear map of the entire topical universe, enabling us to build semantically complete content that satisfies the AI's complex information needs.
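The prompting method can be wrapped in two helpers. One fills in the prompt from the Manual/AI Prompting step, and the other turns the model's numbered or bulleted reply into a clean, de-duplicated list of sub-queries. No LLM is called here because the client library depends on your provider. The names `build_prompt` and `parse_sub_queries`, and the sample reply, are hypothetical.

```python
import re

# The prompt text comes from the Manual/AI Prompting step; {query} and {n} are filled in.
PROMPT_TEMPLATE = (
    "Expand this query ['{query}'] into {n} related sub-queries or user intents, "
    "categorized by search intent."
)

# Matches list items such as "1. foo", "2) foo", "- foo" or "* foo".
LIST_ITEM = re.compile(r"\s*(?:\d+[.)]|[-*])\s+(.+)")


def build_prompt(query: str, n: int = 10) -> str:
    return PROMPT_TEMPLATE.format(query=query, n=n)


def parse_sub_queries(llm_output: str) -> list[str]:
    """Extract list items from an LLM reply, skipping category labels and duplicates."""
    items = []
    seen = set()
    for line in llm_output.splitlines():
        match = LIST_ITEM.match(line)
        if match:
            item = match.group(1).strip()
            if item.lower() not in seen:  # case-insensitive de-duplication
                seen.add(item.lower())
                items.append(item)
    return items
```

Category labels such as "Informational:" are skipped because they are not list items. If you want to keep the intent categories, you could record the most recent label alongside each item.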

Strategic Optimization Tactics for the AI Era

These final tactics are essential for capturing visibility in AI-generated answers.

Comprehensive Topical Authority

The Principle: The AI is looking for deep, systemic trust. It needs to know that your site covers the entire subject with depth and accuracy before it will confidently cite you across multiple QFO branches.

Actionable Tactic: Build deep Topic Clusters. Create a strong "pillar" page for the broad topic, supported by multiple, contextually linked "cluster" pages that exhaustively cover the specific sub-queries (the fan-out branches).
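A pillar-and-cluster structure is easy to audit if you record each page's internal links. In practice the `links` map would come from a site crawl. The sketch below uses hypothetical URLs and a hypothetical `audit_cluster` helper. It reports cluster pages that don't link back to the pillar and cluster pages the pillar doesn't link to.

```python
def audit_cluster(pillar: str, links: dict[str, set[str]]) -> list[str]:
    """List missing links between a pillar page and its cluster pages.

    `links` maps each page URL to the set of internal URLs it links to.
    """
    problems = []
    pillar_links = links.get(pillar, set())
    for page, outgoing in links.items():
        if page == pillar:
            continue
        if pillar not in outgoing:
            problems.append(f"{page} does not link back to {pillar}")
        if page not in pillar_links:
            problems.append(f"{pillar} does not link to {page}")
    return problems


if __name__ == "__main__":
    links = {
        "/headphones/": {"/headphones/battery-life/", "/headphones/comfort/"},
        "/headphones/battery-life/": {"/headphones/"},
        "/headphones/comfort/": set(),
        "/headphones/microphones/": {"/headphones/"},
    }
    for problem in audit_cluster("/headphones/", links):
        print(problem)
```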

Structure for Retrieval (Chunking)

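The Principle: Retrieval systems typically split pages into smaller, self-contained passages, a process known as chunking, and then extract short, factual answers from the passages that match a sub-query. Your page should make that extraction easy and unambiguous.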

Actionable Tactics:

Use clear, declarative headings (H2, H3) that directly state the sub-query's answer (e.g., "Best for Battery Life: Model X").

Put the answer to that heading's question in the first sentence of the section.

Use structured formats like bulleted lists, numbered steps, and comparison tables, since these are easy to extract and tend to be favored by generative AI.
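To see your page roughly the way a passage-based retriever might, you can split it at its H2/H3 headings and look at the first sentence of each chunk. This sketch assumes the page is available as Markdown-style text, where `## ` marks an H2 and `### ` an H3. The `chunk_by_headings` helper is hypothetical, and real retrieval systems choose their own chunk boundaries.

```python
import re


def chunk_by_headings(page: str) -> list[dict]:
    """Split a page into one chunk per H2/H3 heading and record each chunk's lead sentence.

    Assumes Markdown-style text where '## ' marks an H2 and '### ' an H3;
    any text before the first heading is ignored.
    """
    chunks = []
    current = None
    for line in page.splitlines():
        heading = re.match(r"#{2,3}\s+(.+)", line)
        if heading:
            current = {"heading": heading.group(1).strip(), "body": []}
            chunks.append(current)
        elif current is not None and line.strip():
            current["body"].append(line.strip())
    for chunk in chunks:
        text = " ".join(chunk["body"])
        # Lead sentence: everything up to the first '.', '!' or '?' that is followed by whitespace.
        chunk["first_sentence"] = re.split(r"(?<=[.!?])\s+", text, maxsplit=1)[0]
    return chunks
```

If a chunk's first sentence does not answer its heading on its own, that section is a candidate for the answer-first rewrite.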

Prioritize E-E-A-T and Structured Data

QFO's grounding process heavily favors authoritative sources to protect quality and reduce hallucination. To meet that bar, focus on two areas:

E-E-A-T: Strengthen trust signals (author bylines, credentials, references, and citations of original data).

Structured Data (Schema Markup): Implement FAQPage and HowTo schema where they genuinely fit your content. This markup labels which passages answer which specific questions, which can align your content with potential synthetic sub-queries and may improve your chances of becoming a primary citation source.
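FAQPage markup is usually added as JSON-LD. The helper below builds the schema.org `FAQPage` structure, with a `mainEntity` list of `Question` items that each carry an `acceptedAnswer`, from question-and-answer pairs. The `faq_jsonld` name is hypothetical. Since August 2023, Google has shown FAQ rich results only for well-known, authoritative government and health sites. Whether AI features read this markup when matching sub-queries is not publicly documented, so treat it as a clarity aid rather than a guarantee.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(faq_jsonld([("Which model has the best battery life?", "Model X.")]))
```

The returned string goes inside a `<script type="application/ld+json">` element on the page. Each question should match a heading on that page, and each answer should match the visible text beneath it.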

The Query Fan-Out revolution is not a threat; it is the natural evolution of search. For experienced SEOs, it is an opportunity to move beyond surface-level keyword hunting and dominate the deep, complex intent that AI is now designed to satisfy.
